LED displays once had a comfortable predictability. The industry lived in the rhythm of refresh rates, pixel pitch debates, and the slow grind of firmware upgrades. Color calibration meant tedious human oversight, panels were heavy and modular, and content pipelines felt static. That world is still visible in the corner of many control rooms, but it’s become a ghost of its former certainty. AI didn’t just improve LED displays; it rewrote their internal logic.

The shift didn’t announce itself with a single headline. There was no “AI arrives in display tech” banner. Instead, it crept in through algorithms that optimized brightness based on environmental feedback, or auto-tuned gamma curves in real time. For once, displays stopped behaving like stubborn machines and started behaving like attentive collaborators. The distinction is subtle but profound. A wall of LEDs no longer merely reflects what it’s fed; it interprets. Patterns emerge in ways even the displays’ designers hadn’t scripted.

A New Kind of Calibration

Calibration has always been a sacrament. Historically, teams would spend hours or days with photometers and colorimeters, adjusting panels until the output looked right in a controlled room. Outdoor displays were a nightmare: sunlight was never constant, and reflective surfaces introduced chaos. Enter AI. Neural networks trained on thousands of environmental scenarios now make these decisions far faster than any manual adjustment could. A sunny afternoon no longer requires manual intervention; the display senses and compensates. Shadows stretch across a building, and LEDs adjust to preserve contrast without flattening the vibrancy. There’s a subtle artistry here, but it’s computational.
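To make "senses and compensates" concrete, here is a minimal sketch of ambient-driven brightness control. It deliberately swaps the neural network for a fixed logarithmic mapping plus exponential smoothing, which is the kind of baseline a learned model would refine. The function names, the nit range, and the lux limits are all illustrative assumptions, not values from any particular product.

```python
import math

# Hypothetical helper: every default below is an assumed, illustrative value.
def target_brightness(ambient_lux, min_nits=300.0, max_nits=5000.0,
                      lux_floor=10.0, lux_ceiling=100_000.0):
    """Map ambient illuminance (lux) to a target luminance (nits) on a log scale."""
    # Clamp the reading so extreme or faulty sensor values stay in range.
    lux = min(max(ambient_lux, lux_floor), lux_ceiling)
    # Position of the reading between floor and ceiling in log space (0..1).
    t = (math.log10(lux) - math.log10(lux_floor)) / (
        math.log10(lux_ceiling) - math.log10(lux_floor))
    return min_nits + t * (max_nits - min_nits)


def smooth(previous_nits, target_nits, alpha=0.2):
    """Move a fraction alpha toward the target so brightness does not visibly pump."""
    return previous_nits + alpha * (target_nits - previous_nits)


if __name__ == "__main__":
    level = 300.0
    for lux in (50.0, 2_000.0, 60_000.0):  # dusk, overcast, direct sun (assumed)
        level = smooth(level, target_brightness(lux))
        print(f"{lux:>8.0f} lux -> {level:7.1f} nits")
```

The log scale reflects the fact that perceived brightness tracks ratios of light rather than absolute differences; the smoothing step is what keeps a passing cloud from turning into a visible flicker.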

What’s striking is that the AI doesn’t replace the engineer—it amplifies their reach. Panels can be deployed in more ambitious configurations, knowing the underlying system will handle variability automatically. It’s tempting to credit the hardware, but the panels themselves are now secondary to the intelligence orchestrating them. One could put a mediocre LED wall in a prime location and watch it outperform a technically superior system lacking adaptive algorithms. Performance isn’t just about physics anymore; it’s about pattern recognition and prediction.

Content That Anticipates

Content creation pipelines have always been constrained by display limitations. Pixel density dictated how much detail could survive translation from design to execution. High-resolution visuals were often compressed into mediocrity to fit the hardware. Now, AI models can remap content dynamically. Scaling, anti-aliasing, and color adaptation happen on the fly. There’s an invisible curator at work—pixels that would have been lost or muddied in translation are restored in ways that feel intuitive.
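For a sense of what dynamic remapping has to improve on, the sketch below shows the classic fixed approach: a box filter that averages each block of source pixels into one output pixel, which is the simplest form of anti-aliased downscaling. It is a baseline under stated assumptions (grayscale values, integer scale factor, a hypothetical `downscale_box` helper), not the adaptive model the paragraph describes.

```python
def downscale_box(image, factor):
    """Downscale a 2D grayscale image (list of rows) by averaging factor x factor blocks."""
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be multiples of factor")
    out = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            # Gather every source pixel that lands on this output pixel.
            block = [image[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


if __name__ == "__main__":
    checkerboard = [[0, 255, 0, 255],
                    [255, 0, 255, 0],
                    [0, 255, 0, 255],
                    [255, 0, 255, 0]]
    print(downscale_box(checkerboard, 2))  # fine detail collapses to mid-gray
```

The checkerboard case shows the limitation plainly: a fixed filter treats every region the same way, so fine detail averages out. A content-aware remapper would, in principle, spend its effort where the eye is most likely to notice the loss.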

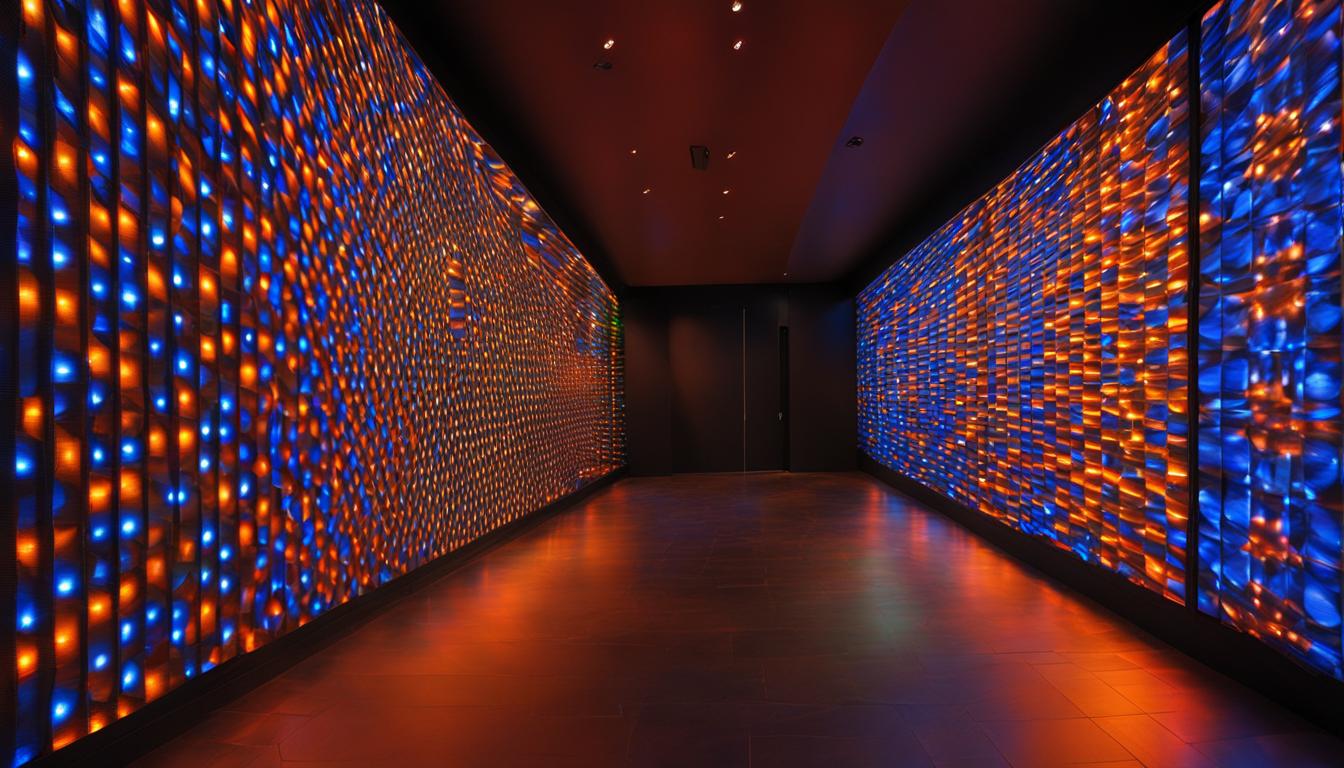

The result is a strange sort of intimacy. Walking past a cityscape installation, it’s easy to forget that the display is a grid of LEDs. AI has smoothed the inherent discretization, predicting where human eyes will notice and where they won’t. There’s no formula for this; every frame is contextually reinterpreted. It’s reminiscent of compression artifacts that vanish not because they’re removed, but because the system knows what the observer is likely to perceive. It’s content that adapts, rather than content that endures.

Hardware’s Quiet Upgrade

There’s a misconception that AI is purely software. While it’s true that neural networks dominate the narrative, the hardware ecosystem has quietly responded. Microcontrollers have gained enough compute to run lightweight inference at the edge, directly at the panel level. Thermal sensors feed streams of data to predictive models, which then adjust not just brightness but voltage distribution to avoid hot spots. Refresh rates fluctuate subtly to minimize perceptible flicker under unusual frame sequences.
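The thermal side of this can be sketched without any model at all. The example below is a reactive rule rather than the predictive approach described above: each zone's drive level is scaled down linearly once its temperature passes a soft limit, bottoming out at a hard limit. The function name, the zone layout, and the temperature thresholds are assumptions chosen for illustration.

```python
# Hypothetical thresholds: real limits depend on the panel and its datasheet.
def derate_drive(temps_c, drive_levels, soft_limit_c=60.0,
                 hard_limit_c=80.0, min_scale=0.5):
    """Scale per-zone drive levels down as zone temperature rises.

    Below soft_limit_c the drive is untouched; at or above hard_limit_c it is
    multiplied by min_scale; in between the scale falls linearly.
    """
    adjusted = []
    for temp, drive in zip(temps_c, drive_levels):
        if temp <= soft_limit_c:
            scale = 1.0
        elif temp >= hard_limit_c:
            scale = min_scale
        else:
            frac = (temp - soft_limit_c) / (hard_limit_c - soft_limit_c)
            scale = 1.0 - frac * (1.0 - min_scale)
        adjusted.append(drive * scale)
    return adjusted


if __name__ == "__main__":
    temps = [52.0, 70.0, 85.0]   # three zones, degrees Celsius (assumed readings)
    drive = [1.0, 1.0, 1.0]      # normalized drive levels
    print(derate_drive(temps, drive))
```

A predictive version would act on a forecast temperature instead of the current reading, which is where the sensor streams mentioned above earn their keep: the curve stays the same, but the input arrives earlier.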

These improvements are under the hood, rarely celebrated, but their cumulative effect is noticeable. A display that once needed quarterly maintenance can now run far longer without perceptible drift. Failures no longer appear as sudden outages but as subtle deviations that the system auto-corrects. LED panels have become slightly sentient in their resilience, not in a science-fiction sense, but in the way they monitor, anticipate, and self-adjust. The line between mechanical precision and adaptive behavior blurs.
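One plausible shape for "subtle deviations that the system auto-corrects" is a running estimate of each panel's output compared against a reference, with a gain correction applied once the gap exceeds a tolerance. The sketch below assumes a luminance sensor per panel and uses an exponential moving average; the `DriftMonitor` class and its parameters are hypothetical, and a production system would likely track color channels as well.

```python
class DriftMonitor:
    """Track slow luminance drift on one panel and propose a corrective gain."""

    def __init__(self, reference, alpha=0.1, tolerance=0.02):
        self.reference = reference    # expected luminance for the test pattern
        self.alpha = alpha            # smoothing factor for the running estimate
        self.tolerance = tolerance    # relative error that triggers a correction
        self.estimate = reference
        self.gain = 1.0

    def update(self, measured):
        """Feed one measurement taken at the current gain; return the gain to apply."""
        # The moving average filters out single-frame noise.
        self.estimate += self.alpha * (measured - self.estimate)
        error = (self.estimate - self.reference) / self.reference
        if abs(error) > self.tolerance:
            # Scale the gain so the smoothed output returns to the reference.
            self.gain *= self.reference / self.estimate
            # Assumption: the correction takes effect before the next sample.
            self.estimate = self.reference
        return self.gain


if __name__ == "__main__":
    monitor = DriftMonitor(reference=100.0)
    for reading in (100.0, 98.0, 95.0, 93.0, 92.0, 91.0, 90.0):
        print(reading, round(monitor.update(reading), 4))
```

The tolerance is what separates drift from noise: set it too tight and the panel chases its own sensor jitter, too loose and the deviation becomes visible before it is corrected.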

Metrics in Motion

Traditional performance metrics (contrast ratio, brightness, color gamut) are no longer sufficient descriptors. AI introduces variability that’s context-dependent, meaning numbers on a spec sheet tell only a fraction of the story. An 8K LED wall has a higher native resolution than a 4K installation, but if the 4K system adapts to environmental lighting more effectively, it can appear sharper and more vivid. Data-driven performance has overtaken static specifications.

This makes benchmarking tricky. Standardized tests can’t account for AI’s perceptual adjustments. A panel might deliberately reduce brightness in one section to enhance a visual narrative elsewhere, confusing automated meters. Engineers must now combine quantitative assessment with observational testing. It’s an uncomfortable but necessary evolution: the display is no longer just a machine; it’s a system with emergent behavior.

The Implicit Feedback Loop

Perhaps the most underappreciated shift is the feedback loop between the display and its environment. Sensors capture ambient light, temperature, motion, and even audience density. AI interprets these streams to continuously optimize output. A concert venue can host multiple shows in a single day, each with vastly different lighting requirements, without an operator lifting a finger.

This isn’t simply convenience—it changes design philosophy. Panels can be positioned with more creative freedom, no longer shackled to uniform illumination zones. Walls of LEDs can curve, twist, or stagger, knowing the AI will reconcile inconsistencies automatically. It’s a liberation from the traditional orthodoxy of display deployment. The technology doesn’t just support expression; it subtly redefines what expression can be.

Shadows of Oversight

There are pitfalls. Algorithms are trained on historical data, which introduces assumptions that may not hold in novel contexts. A panel may overcompensate for perceived glare in a new environment, flattening critical highlights. AI can make intelligent mistakes, which are harder to diagnose than a burnt-out diode. Understanding how the model reasons becomes a new kind of literacy—less about schematics, more about data behavior.
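A first line of defense against this kind of mistake is simply knowing when the inputs fall outside what the model was trained on. The sketch below flags sensor readings that sit far from the training distribution using a per-feature z-score. It is a deliberately crude novelty check, and the feature names, sample data, and the `novelty_flags` helper are all assumptions for illustration.

```python
import statistics


def novelty_flags(training_samples, reading, z_limit=3.0):
    """Flag features of `reading` that lie far outside the training distribution.

    training_samples maps a feature name to the values seen during training;
    reading maps the same feature names to the current sensor values.
    """
    flags = {}
    for name, value in reading.items():
        history = training_samples[name]
        mean = statistics.fmean(history)
        spread = statistics.pstdev(history)
        if spread == 0:
            # No variation in training: any different value is novel.
            flags[name] = value != mean
        else:
            flags[name] = abs(value - mean) / spread > z_limit
    return flags


if __name__ == "__main__":
    training = {
        "ambient_lux": [100, 200, 300, 400, 500],   # assumed indoor training data
        "panel_temp_c": [20, 22, 24, 26, 28],
    }
    outdoor = {"ambient_lux": 5000, "panel_temp_c": 25}
    print(novelty_flags(training, outdoor))
```

A flag like this does not explain why the model overcompensated, but it tells the operator where to look first, which is most of the diagnostic battle when the fault is in the data rather than the diode.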

This demands a shift in operational mindset. Maintenance teams are increasingly analysts of algorithmic output rather than technicians of circuits. Diagnostic tools must reveal not just voltage or current but inference pathways. The challenge is partly cultural: engineers are comfortable with deterministic systems, and AI doesn’t operate in absolutes. It introduces ambiguity in a space that previously rewarded precision.

Scaling Complexity

The AI revolution also scales the complexity of installation logistics. Individual panels are no longer independent; they are nodes in a cognitive network. Deploying a large-scale installation is now partly an exercise in data architecture. Communication latency, bandwidth, and synchronization take on new importance because predictive models rely on coherent, real-time inputs. Misaligned sensors or inconsistent network traffic can introduce artifacts that no spec sheet would reveal.
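The coherence requirement can be checked very simply before any model sees the data. As a rough sketch, assuming each sensor reports a timestamp in milliseconds on a shared clock, the function below lists the sensors whose latest reading lags the newest one by more than an allowed skew. The sensor ids, the 50 ms budget, and the `stale_sensors` name are hypothetical.

```python
def stale_sensors(timestamps_ms, max_skew_ms=50.0):
    """Return ids of sensors whose latest reading lags the newest by more than max_skew_ms.

    timestamps_ms maps a sensor id to the timestamp of its most recent reading,
    with all timestamps assumed to come from a synchronized clock.
    """
    if not timestamps_ms:
        return []
    newest = max(timestamps_ms.values())
    return sorted(sensor_id for sensor_id, ts in timestamps_ms.items()
                  if newest - ts > max_skew_ms)


if __name__ == "__main__":
    latest = {"north-lux": 10_000, "south-lux": 9_990, "roof-temp": 9_700}
    print(stale_sensors(latest))
```

Readings flagged this way can be excluded or down-weighted, so one lagging node does not drag a whole wall toward conditions that have already passed.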

Yet this complexity comes with opportunity. Large-scale curved or irregular structures, once considered impractical, are increasingly feasible. AI-mediated blending and tiling handle irregular geometry seamlessly. The panels may be the same physical modules used a decade ago, but their behavior in a networked, intelligent system makes them fundamentally different. It’s as if the physical hardware has been reimagined through software cognition.

The Quiet Revolution in Perception

Ultimately, the most profound change may be psychological. AI-enabled displays interact with human perception in ways that designers are still discovering. Small adjustments, too subtle to notice consciously, influence attention, retention, and emotional impact. Display operators may find themselves questioning whether the audience sees what was intended—or what the system has decided is optimal. This is not dystopia; it’s a subtle, pervasive shift in agency.

There’s a tension here: technology is both enabler and editor. The display is no longer a passive conduit. It nudges the observer, shaping experience imperceptibly. For an industry long obsessed with resolution, refresh, and color gamut, this is a new axis entirely. The panel’s physical specifications matter less than its ability to respond, predict, and adapt.

A Landscape Redrawn

Looking across modern installations—from stadium screens to urban facades—one can see the evidence of AI’s quiet hand. Walls that once demanded precise environmental control now thrive in chaos. Content appears more coherent, colors more stable, shadows less harsh. Engineers are observing a new interplay between algorithmic agency and physical limitations. What once felt like incremental progress now reads as a subtle redesign of what a display can be.

And yet, it’s still a work in progress. Models misread data. Sensors drift. Neural networks sometimes misinterpret novelty. But even in these moments of imperfection, there’s a new rhythm. The wall is no longer a wall; it’s an active participant. The gap between display as machine and display as intelligent interface is shrinking, and the boundaries are messy, human, and oddly exhilarating.